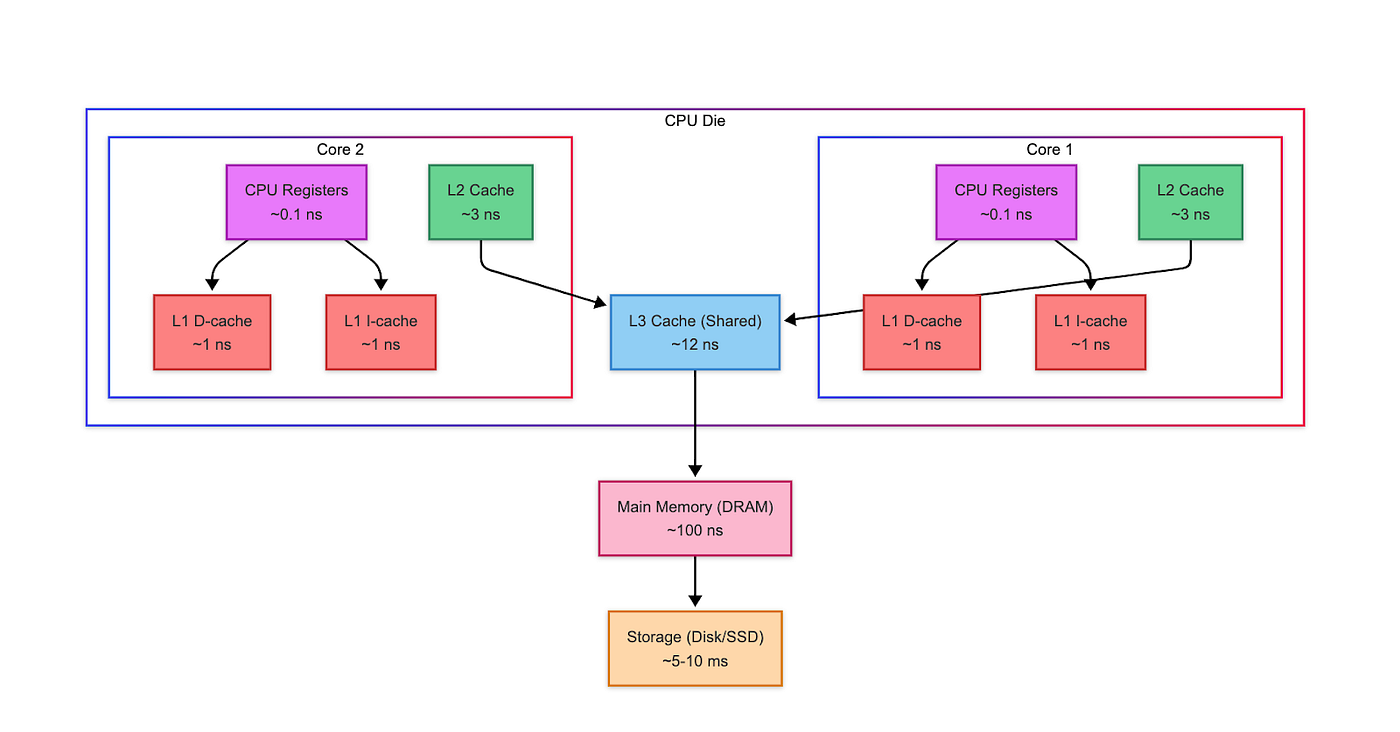

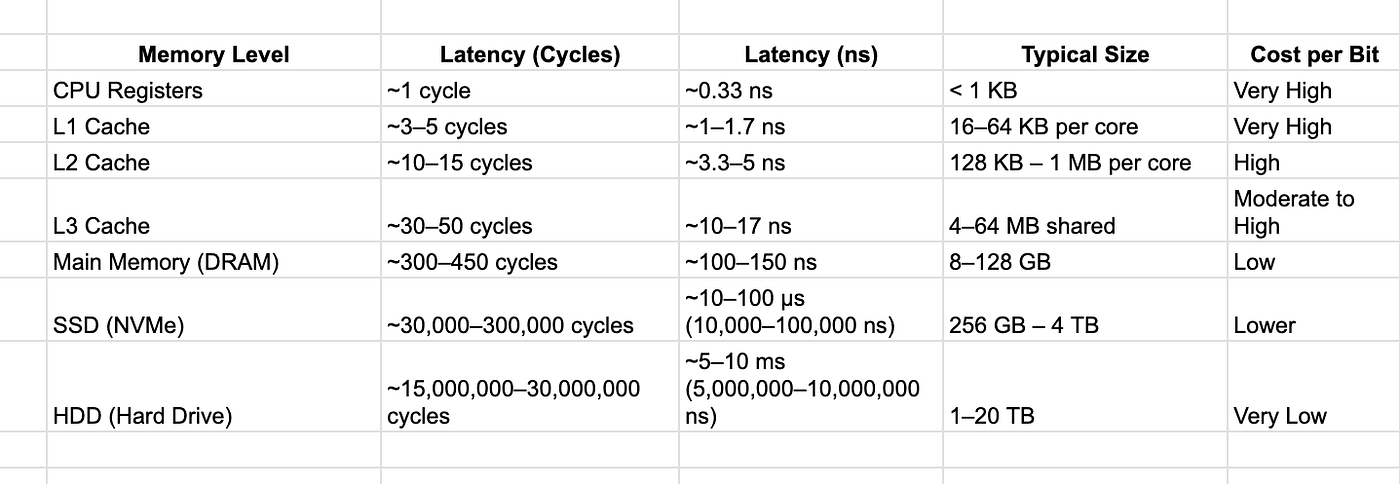

Memory Hierarchy

Problem

Modern multi-core CPUs depend on caches to accelerate memory access and improve performance. However, when multiple cores cache the same memory address, maintaining a consistent view of memory across all cores and main memory (known as cache coherence) becomes a tricky problem.

How do CPUs handle writes?

Write-Through Caching: When a core writes to a cache line, the same update is immediately written to main memory.

Write-Back Caching: When a core writes to a cache line, the update is made only in the core’s private cache. The new value is written back to main memory only when the cache line is evicted or needs to be shared.
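The difference between the two policies can be sketched with a toy model. This is only an illustration: the class names, the dictionary standing in for main memory, and the address `0x40` are all made up for the example, and a real cache tracks whole lines rather than single values.

```python
class WriteThroughCache:
    """Toy cache: every write is also sent to main memory."""

    def __init__(self, memory):
        self.memory = memory
        self.lines = {}
        self.memory_writes = 0

    def write(self, addr, value):
        self.lines[addr] = value
        self.memory[addr] = value      # immediate update of main memory
        self.memory_writes += 1


class WriteBackCache:
    """Toy cache: writes stay private until the line is evicted."""

    def __init__(self, memory):
        self.memory = memory
        self.lines = {}
        self.dirty = set()
        self.memory_writes = 0

    def write(self, addr, value):
        self.lines[addr] = value
        self.dirty.add(addr)           # remember that memory is now stale

    def evict(self, addr):
        if addr in self.dirty:
            self.memory[addr] = self.lines[addr]   # write back once
            self.memory_writes += 1
            self.dirty.discard(addr)
        self.lines.pop(addr, None)


def demo_write_policies():
    wt_mem, wb_mem = {0x40: 0}, {0x40: 0}
    wt, wb = WriteThroughCache(wt_mem), WriteBackCache(wb_mem)
    for value in range(1, 4):          # three writes to the same address
        wt.write(0x40, value)
        wb.write(0x40, value)
    stale = wb_mem[0x40]               # memory still holds the old value
    wb.evict(0x40)
    return wt.memory_writes, wb.memory_writes, stale, wb_mem[0x40]
```

In this sketch write-through pays one memory write per store, while write-back pays a single one at eviction. The price is the window in which main memory is stale, which is exactly what a coherence protocol has to manage.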

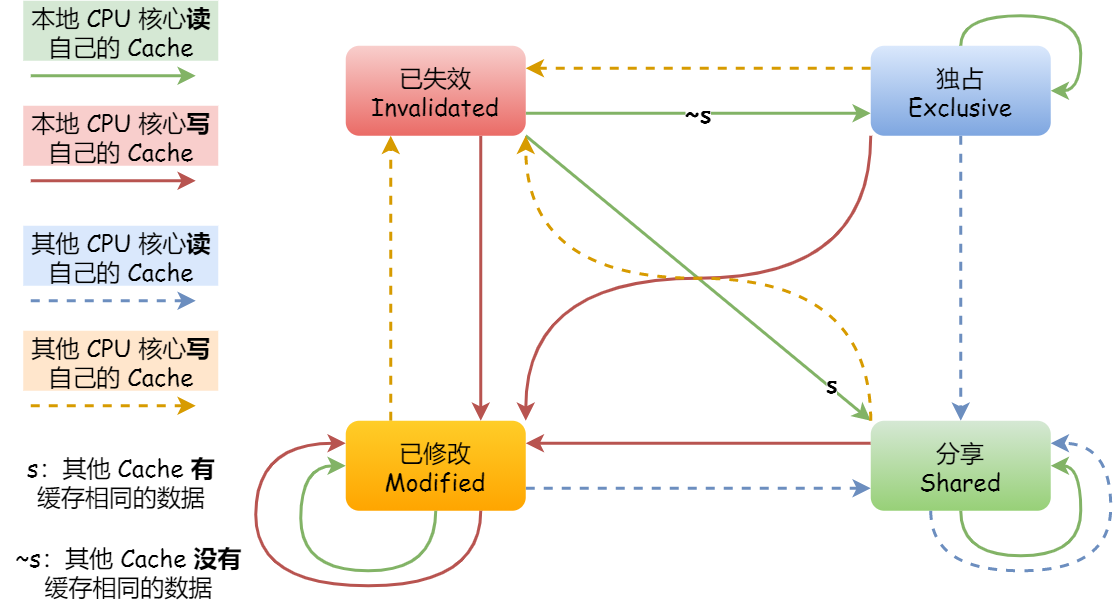

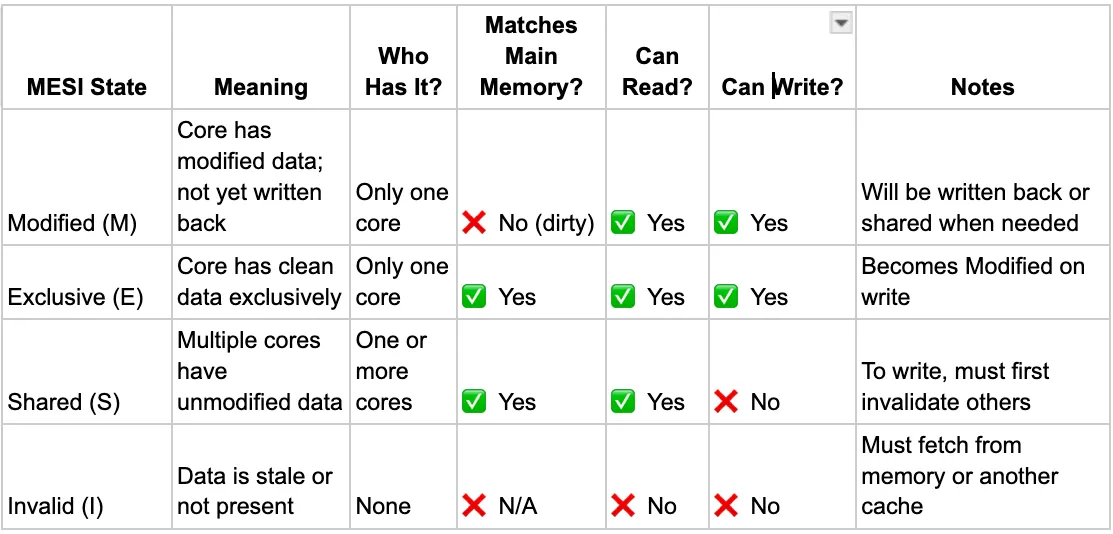

MESI Protocol

Every cache line is in one of four states: Modified (M), Exclusive (E), Shared (S), or Invalid (I).

Exclusive(E):

Only this core has the data in its private cache, and the copy matches main memory.

- Local write -> [M]

- Other core writes -> [I]

- Other core reads -> [S]

Shared(S):

One or more cores hold a copy of the latest version of the data in their caches, and the copies match main memory.

- Local write -> [M]

- Other core writes -> [I]

Modified(M):

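Only this core has the latest version of the data; main memory is stale.

- Other core writes -> [I]

- Other core reads -> [S] (after the modified data is written back)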

Invalid(I):

This core's copy is not valid; another core may have the latest version of the data.

- Local write -> [M]

- Local read -> [S] if another cache holds the line, otherwise [E]
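The transitions listed above can be collected into a small lookup table. The sketch below is a hypothetical helper, not part of any real library. It assumes the usual textbook rules that a read from Invalid lands in Exclusive when no other cache holds the line, and that a remote read of a Modified line downgrades it to Shared. The event names are invented for the example.

```python
from enum import Enum


class State(Enum):
    M = "Modified"
    E = "Exclusive"
    S = "Shared"
    I = "Invalid"


# (current state, event) -> next state. Events are seen from one cache:
# "local_*" come from its own core, "other_*" are snooped from other cores.
TRANSITIONS = {
    (State.E, "local_write"): State.M,
    (State.E, "other_write"): State.I,
    (State.E, "other_read"): State.S,
    (State.S, "local_write"): State.M,
    (State.S, "other_write"): State.I,
    (State.M, "other_write"): State.I,
    (State.M, "other_read"): State.S,
    (State.I, "local_write"): State.M,
}


def next_state(state, event, others_have_copy=False):
    """Return the next MESI state of a line for a given event."""
    if state is State.I and event == "local_read":
        # A read miss ends in Shared or Exclusive depending on other caches.
        return State.S if others_have_copy else State.E
    # Any pair not listed (for example a local read hit) keeps the state.
    return TRANSITIONS.get((state, event), state)
```

For example, `next_state(State.S, "other_write")` gives `State.I`, and `next_state(State.I, "local_read")` gives `State.E` when no other cache holds the line.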

How caches communicate

Bus snooping

Bus snooping is a hardware technique where each core monitors the shared system bus to keep an eye on what other cores are doing with memory.

- Every time a core reads or writes to a memory address, that action is broadcast on the system bus.

- Other cores snoop (listen) to the bus.

- If another core has a copy of the requested data, it can:

  - Respond with the most recent version (in Modified or Exclusive state).

  - Invalidate or update its own cached copy if needed.

  - Trigger a state change in its MESI cache line.

Cache-to-Cache Transfer

When a core issues a memory read request, and another core already has the most recent copy of the requested data in its cache, it can respond directly → this is called a cache-to-cache transfer.

Instead of fetching the data from main memory, the owning core:

- Snoops the request via the bus,

- Recognizes that it holds the latest copy, and

- Sends the data directly to the requesting core.
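Snooping and cache-to-cache transfer can be sketched together in a toy simulation. Everything here is illustrative: `Core`, `Bus`, the method names, and the address `0x80` are made up, states are plain strings, and only caches holding a line in Modified or Exclusive supply data, as in the bus-snooping list above.

```python
class Bus:
    """Toy shared bus: forwards each request to every other core."""

    def __init__(self, memory):
        self.memory = memory
        self.cores = []

    def bus_read(self, requester, addr):
        shared, supplied = False, None
        for core in self.cores:
            if core is requester:
                continue
            has_copy, value = core.snoop_read(addr)
            shared = shared or has_copy
            if value is not None:
                supplied = value
        if supplied is not None:
            return supplied, "cache-to-cache", True
        return self.memory[addr], "memory", shared

    def bus_invalidate(self, requester, addr):
        for core in self.cores:
            if core is not requester:
                core.snoop_invalidate(addr)


class Core:
    def __init__(self, name, bus):
        self.name, self.bus = name, bus
        self.lines = {}                      # addr -> [state, value]
        bus.cores.append(self)

    def read(self, addr):
        line = self.lines.get(addr)
        if line and line[0] != "I":
            return line[1], "hit"
        value, source, shared = self.bus.bus_read(self, addr)
        self.lines[addr] = ["S" if shared else "E", value]
        return value, source

    def write(self, addr, value):
        line = self.lines.get(addr)
        if not line or line[0] in ("S", "I"):
            self.bus.bus_invalidate(self, addr)   # others must drop their copies
        self.lines[addr] = ["M", value]           # E and M are written silently

    def snoop_read(self, addr):
        """React to another core's read. Returns (has_copy, supplied_value)."""
        line = self.lines.get(addr)
        if not line or line[0] == "I":
            return False, None
        supplied = None
        if line[0] in ("M", "E"):
            supplied = line[1]                    # owner answers directly
            if line[0] == "M":
                self.bus.memory[addr] = line[1]   # write the dirty data back
        line[0] = "S"
        return True, supplied

    def snoop_invalidate(self, addr):
        line = self.lines.get(addr)
        if line and line[0] != "I":
            if line[0] == "M":
                self.bus.memory[addr] = line[1]
            line[0] = "I"


def demo_cache_to_cache():
    bus = Bus(memory={0x80: 7})
    a, b = Core("A", bus), Core("B", bus)
    first = a.read(0x80)       # miss, served by memory, A holds the line in E
    a.write(0x80, 42)          # E -> M without any bus traffic
    second = b.read(0x80)      # A snoops the read and supplies the data
    return first, second, a.lines[0x80][0], b.lines[0x80][0], bus.memory[0x80]
```

In the demo the second read is answered by core A rather than by main memory, and both caches end up holding the line in Shared.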

Real scenario

Bus signals

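- BusRd (Bus Read): a core requests a cache line in order to read it.

- BusRdX (Read For Ownership): a core that does not hold the line requests it with the intent to write, [I] -> [M].

- BusUpgr (Bus Upgrade): a core that already holds the line asks the other caches to invalidate their copies, [S] -> [M].

- Flush (Write Back): a cache writes a modified line back to main memory.

- FlushOpt (Flush Optimization): a cache puts the line on the bus to supply it to another cache (cache-to-cache transfer, C2C).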
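As a rough sketch, the signals above can be tied to the state a line is in when the core touches it. The two functions below are hypothetical helpers that follow the common textbook description of MESI; as a simplification, Shared copies stay silent on a snooped request, although some descriptions let them answer with FlushOpt as well.

```python
def bus_signal(state, op):
    """Bus transaction a core issues for `op` on a line in `state`.

    Returns None when the access is a silent hit with no bus traffic.
    """
    if op == "read":
        return "BusRd" if state == "I" else None
    if op == "write":
        if state == "I":
            return "BusRdX"     # fetch the line and take ownership
        if state == "S":
            return "BusUpgr"    # data is already here, only invalidate others
        return None             # E and M can be written silently
    raise ValueError(f"unknown operation: {op}")


def snoop_response(state, signal):
    """What a snooping cache puts on the bus for a snooped request."""
    if signal in ("BusRd", "BusRdX"):
        if state == "M":
            return "Flush"      # dirty data goes to memory and the requester
        if state == "E":
            return "FlushOpt"   # clean data is handed over cache-to-cache
    return None                 # simplification: S and I stay silent
```

For example, `bus_signal("S", "write")` gives `"BusUpgr"`, while `bus_signal("E", "write")` gives `None` because no other cache holds a copy.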

Limitations

- False Sharing → MESI operates at cache-line granularity, not variable granularity. That means even if two threads access different variables, if those variables fall on the same cache line:

  - MESI treats them as shared data.

  - This causes unnecessary invalidations, even though no real data conflict exists.

- Scalability Issues → MESI relies on bus snooping, where all cores must snoop every memory transaction:

  - As the number of cores increases, the snooping traffic grows rapidly.

  - More cores mean more invalidations, more broadcasts, and more bus congestion.

- Latency on Writes → To write to a cache line that’s shared, a core must broadcast a write intent, wait for other cores to invalidate their copies, then perform the write. This adds latency, especially when multiple cores frequently access the same data, or when contention is high.

- No Built-in Support for Synchronization → MESI doesn’t handle higher-level synchronization (like locks or barriers). It only ensures data coherence, not program correctness.
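The false sharing limitation can be made concrete with a little address arithmetic. The sketch below assumes a 64-byte cache line (typical for x86-64, but an assumption here) and uses made-up addresses. It only counts how often one core's write would invalidate the other core's copy and does not measure real hardware.

```python
CACHE_LINE_SIZE = 64  # bytes; assumed value, check your CPU


def cache_line(addr):
    """Index of the cache line that a byte address falls into."""
    return addr // CACHE_LINE_SIZE


def count_invalidations(addr_a, addr_b, writes_per_core):
    """Core 0 writes addr_a and core 1 writes addr_b, taking turns.

    Counts the writes that must first invalidate the other core's copy
    of the line, i.e. the line is currently owned by the other core.
    """
    owner = {}                 # cache line index -> core that last wrote it
    invalidations = 0
    for _ in range(writes_per_core):
        for core, addr in ((0, addr_a), (1, addr_b)):
            line = cache_line(addr)
            if owner.get(line, core) != core:
                invalidations += 1
            owner[line] = core
    return invalidations


# Two 8-byte counters placed next to each other share one line.
packed = count_invalidations(0x1000, 0x1008, writes_per_core=1000)

# Padding the second counter to the next line boundary separates them.
padded = count_invalidations(0x1000, 0x1000 + CACHE_LINE_SIZE, writes_per_core=1000)
```

In this model the packed layout invalidates on every write except the very first one, while the padded layout never does, even though the two cores never touch each other's variable in either case.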

Optimization

Protocol Optimization

- AMD MOESI (Owned state)

- Intel MESIF (Forward state)

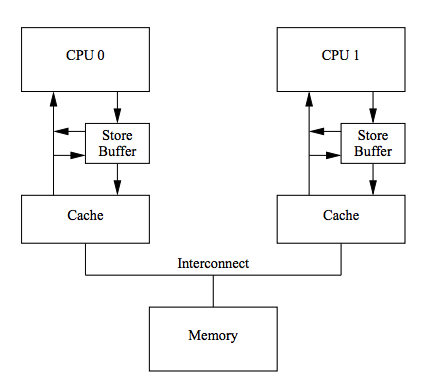

Store Buffers + Store Forwarding

Problem: A write operation has to wait for an acknowledgement (ACK) from the other cores, which is an unnecessary stall for the CPU.

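Store buffers: The core writes to a store buffer and carries on with other work until the response arrives. On its own, a store buffer can break program order, because the core's own later load may miss a value that is still sitting in the buffer. Store forwarding fixes this by letting loads read data directly from the store buffer.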

Limitation: Memory inconsistency. Other cores may observe the stores in a different order than they were issued, so a memory barrier is needed.
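A toy model shows both effects: store forwarding keeps the core's own view consistent, while another core can still see the stores out of order until a barrier is used. The class `BufferedCore`, the variables `a` and `b`, and the idea of modelling a barrier as "drain the buffer" are all simplifying assumptions for this sketch.

```python
class BufferedCore:
    """Toy core with a store buffer (hypothetical model, not real hardware)."""

    def __init__(self, shared, owned_lines):
        self.shared = shared            # dict standing in for the coherent view
        self.owned = set(owned_lines)   # lines this core already holds in M/E
        self.store_buffer = []          # pending (addr, value) stores awaiting ACKs

    def store(self, addr, value):
        if addr in self.owned:
            self.shared[addr] = value   # no ACK needed, visible at once
        else:
            self.store_buffer.append((addr, value))   # keep working meanwhile

    def load(self, addr, forwarding=True):
        if forwarding:
            # Store forwarding: the newest buffered store to addr wins.
            for buffered_addr, value in reversed(self.store_buffer):
                if buffered_addr == addr:
                    return value
        return self.shared[addr]

    def barrier(self):
        """Memory barrier, modelled as draining the store buffer."""
        for addr, value in self.store_buffer:
            self.shared[addr] = value
        self.store_buffer.clear()


def run_store_buffer_demo(use_barrier):
    shared = {"a": 0, "b": 0}
    core0 = BufferedCore(shared, owned_lines={"b"})
    core0.store("a", 1)                 # buffered: line "a" is not owned
    if use_barrier:
        core0.barrier()
    core0.store("b", 1)                 # committed immediately
    seen_by_other_core = (shared["b"], shared["a"])
    without_forwarding = core0.load("a", forwarding=False)
    with_forwarding = core0.load("a")
    return seen_by_other_core, without_forwarding, with_forwarding
```

Without the barrier, another core sees `b == 1` while `a` is still `0`, although the program stored to `a` first. With the barrier, both stores are visible in order.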

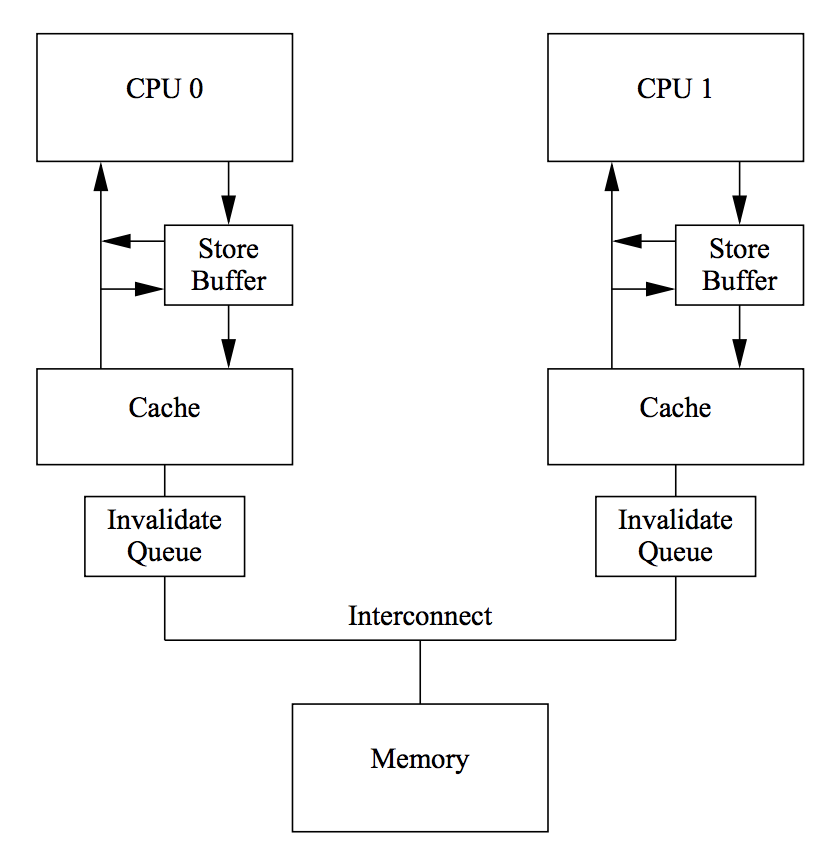

Invalidate Queue

Problem: The size of the store buffer is limited. If core B is too busy to process an invalidate request and send its ACK, core A's store buffer fills up and core A is still blocked waiting for the invalidate ACK.

Invalidate queue: Core B (the busy core) responds with an ACK immediately, pushes the invalidate request into its invalidate queue, and handles it later.

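Limitation: Memory inconsistency. Until the queued invalidation is processed, core B can still read its stale copy, so a memory barrier is needed here as well.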
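The stale read that an invalidate queue allows can be reproduced in a few lines. This is a sketch under simplifying assumptions: `QueuedCore` and its methods are invented names, core A's write is modelled as a direct update of a `memory` dictionary, and processing the queue stands in for what a barrier forces the core to do.

```python
class QueuedCore:
    """Toy core that acknowledges invalidations before applying them."""

    def __init__(self):
        self.cache = {}               # addr -> value for valid local copies
        self.invalidate_queue = []    # invalidations acknowledged but not applied

    def load(self, addr, memory):
        if addr not in self.cache:    # miss: fetch the current value
            self.cache[addr] = memory[addr]
        return self.cache[addr]

    def receive_invalidate(self, addr):
        self.invalidate_queue.append(addr)
        return "ACK"                  # sent at once, the line is still cached

    def process_invalidate_queue(self):
        """Apply all queued invalidations (what a barrier would force)."""
        for addr in self.invalidate_queue:
            self.cache.pop(addr, None)
        self.invalidate_queue.clear()


def demo_invalidate_queue():
    memory = {"a": 0}
    core_b = QueuedCore()
    core_b.load("a", memory)                 # core B caches a == 0

    ack = core_b.receive_invalidate("a")     # core A wants to write a
    memory["a"] = 1                          # core A proceeds after the ACK

    stale = core_b.load("a", memory)         # still served from the old copy
    core_b.process_invalidate_queue()
    fresh = core_b.load("a", memory)         # miss, new value is fetched
    return ack, stale, fresh
```

Core A gets its ACK without waiting, but core B keeps reading the old value until it drains the queue.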

Links

https://xiaolincoding.com/os/1_hardware/cpu_mesi.html#%E6%80%BB%E7%BB%93

https://wudaijun.com/2019/04/cache-coherence-and-memory-consistency/